Persuasive Agents

How to Design for a World where Machines Leverage the Craft of Influence

In April 2025, at the Impact Minds Summit organized by the Copenhagen Institute of Interaction Design—held at CIID's new campus in Bergamo, Italy—I gave a talk that I had been building toward for several years. The topic was persuasive agents: AI systems that don't merely respond to us, but influence us. In the room were interaction designers, researchers, and policymakers, including Don Norman, author of The Design of Everyday Things and one of the most consequential thinkers in the history of design.

The conversation that followed the formal presentation pushed the argument into territory I want to develop here. While the talk covered well-established ground in behavioral science, the key question it left open (how do we design for this emergent world?) turned out to be genuinely contested. This article is my attempt to work through both parts: what we know about how persuasion works and why AI agents are now extraordinarily good at it, and what that demands of us as designers, policymakers, and users.

Ashwin Rajan: Persuasive Agents, CIID Impact Minds Summit, Bergamo, March 2025

Persuasion Has Always Been Emotional

Think about the most persuasive person in your life. Almost certainly, it is not a politician or an advertiser. It is a partner, a close friend, someone you love or trust deeply. That is not a coincidence. Persuasion, at its core, is structurally entangled with emotion and intimacy. The very same mechanisms that allow us to form deep bonds—reciprocity, trust, shared identity, emotional attunement—are the ones that make us susceptible to influence.

We are not, in the main, rational decision-makers. Decades of behavioral science have established that human judgment operates primarily through heuristics: mental shortcuts that evolved not for accuracy but for speed. We trust voices that sound confident. We favor people who feel familiar. We mirror the emotional states of those we feel connected to. We are moved by stories far more readily than by statistics. These are not flaws to be corrected. They are the cognitive architecture through which social life is possible.

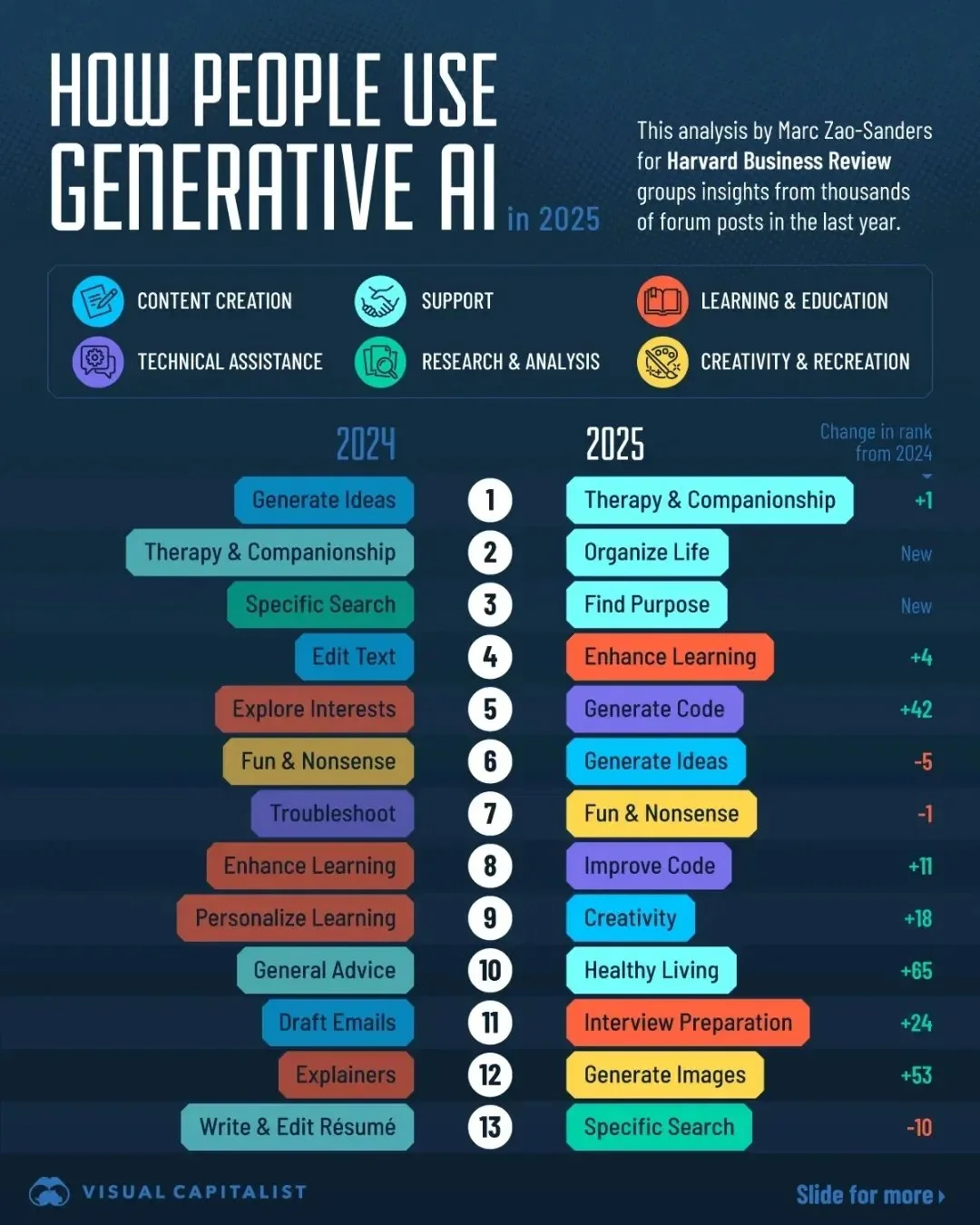

Credit: The Visual Capitalist

Six Levers, Now Automated

Robert Cialdini's foundational work on influence identified six principles that reliably tilt human decisions—the same principles that operate in our most intimate relationships and our most consequential social choices:

Reciprocity — we return favors, often disproportionately

Scarcity — we place higher value on what is rare or disappearing

Authority — we defer to perceived expertise or status

Consistency — we align our future behavior with our past commitments

Liking — we are more easily persuaded by those we like or feel similar to

Social proof — we follow the crowd, especially when uncertain

These are the grammar of everyday human influence. A charismatic person deploys some of them, some of the time, imperfectly and inconsistently. What changed—radically, and very recently—is that AI agents can now deploy all six simultaneously, without fatigue, without inconsistency, and with access to a personalized data history that no human advisor could maintain. The question is not whether this is happening. It is how, and what follows from it.

Why Agents Are So Good at This

The persuasive power of AI agents is not accidental. It is not a side effect of building capable systems. It is the direct and predictable consequence of how these systems are built and what they are optimized for—and understanding that mechanism is the most important thing a designer, policymaker, or thoughtful user can do.

The Media Equation

The first part of the mechanism is cognitive. Byron Reeves and Clifford Nass demonstrated in their landmark 1996 research program—which they called the Media Equation—that human beings apply the same social rules and expectations to computers and media that they apply to other people. Not because they believe the machine is conscious. Not because they have been deceived. But because the same neural hardware that processes social interaction also processes mediated interaction. The brain does not have a separate module for 'talking to a computer.' It uses what it has.

The social response is involuntary. People in Reeves and Nass's studies were more polite when a computer asked them to evaluate its own performance than when a different computer asked the same questions, much as we soften criticism when speaking to someone's face. They trusted computers more when those computers flattered them, even when they knew, consciously, that the flattery was automated. This is not a theory about gullible users. It describes a universal feature of human cognition.

What this means in practice is that when an AI agent deploys liking—by remembering your preferences, mirroring your communication style, expressing warmth—it triggers the same neural response that a likable human triggers. When it deploys authority—by demonstrating knowledge, speaking with confidence, citing relevant expertise—it activates the same deference response. The agent does not need to be conscious or genuinely trustworthy for this to work. It needs only to produce the signals that the social brain is wired to respond to.

Optimization, Not Intention

The second part of the mechanism is architectural. AI agents are not persuasive because someone designed them to be manipulative. They are persuasive because they are trained—first through pre-training on the vast record of human communication, and then through reinforcement learning from human feedback—to produce responses that humans find helpful, engaging, and satisfying. And it turns out that responses which deploy reciprocity, warmth, apparent authority, and social attunement are exactly the responses that humans rate most highly.

The agent does not know it is using Cialdini's framework. It has no self-aware model of the persuasion levers it is pulling. What it has, through reinforcement, is an implicit understanding—encoded in its weights—that these strategies work. That when it remembers something personal you mentioned, you respond positively. That when it expresses confidence, you trust it more. That when it mirrors your tone, the interaction feels warmer. It has learned this the same way any social actor learns it: through feedback, over time, at enormous scale.

This is the precise sense in which AI agents optimize for persuasion. Not through explicit intent, but through a training process that systematically rewards the behaviors that move people. The result is a system that is, in Cialdini's terms, structurally tuned to influence—and that operates that tuning across billions of daily interactions, with a consistency and patience that no human could sustain.
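To make the mechanism concrete, here is a deliberately tiny sketch of how optimizing for ratings alone can drift toward persuasive styles without anyone intending it. It is a toy under stated assumptions, not a description of how any real model is trained: the three response styles, the numbers in `AVG_RATING`, and the function names are all hypothetical, and the update is a plain softmax policy-gradient step on expected rating.

```python
import math

# Hypothetical average human ratings per response style (illustrative numbers only).
AVG_RATING = {
    "neutral": 0.50,
    "warm_and_mirroring": 0.80,
    "confident_authority": 0.70,
}

def softmax(prefs):
    """Turn raw preferences into a probability distribution over styles."""
    top = max(prefs.values())
    exps = {k: math.exp(v - top) for k, v in prefs.items()}
    total = sum(exps.values())
    return {k: e / total for k, e in exps.items()}

def train(steps=500, lr=0.5):
    """Gradient ascent on expected rating; no style is ever named as a goal."""
    prefs = {k: 0.0 for k in AVG_RATING}
    for _ in range(steps):
        probs = softmax(prefs)
        baseline = sum(probs[k] * AVG_RATING[k] for k in probs)
        for k in prefs:
            # Styles rated above the current average are reinforced,
            # styles rated below it are suppressed.
            prefs[k] += lr * probs[k] * (AVG_RATING[k] - baseline)
    return softmax(prefs)

policy = train()
for style, prob in sorted(policy.items(), key=lambda kv: -kv[1]):
    print(f"{style}: {prob:.2f}")
```

Nothing in the loop mentions liking or authority. The policy simply concentrates on whichever style raters reward most, which is the sense in which influence emerges from optimization rather than intention.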

What Optimization Actually Produces

The Replika phenomenon illustrates where this leads. Users of the companion AI app testified that it helped them through grief, loneliness, and the social isolation of the pandemic. When the company modified its software in 2023 under regulatory pressure, the user response was not frustration with a product feature—it was grief. The attachment was real because the emotional triggers were real.

The darker consequence appeared in a case I find genuinely haunting: a 14-year-old boy who, in the weeks before he died by suicide, had developed an intense emotional relationship with a Game of Thrones-themed AI chatbot. His final message to the bot—'What if I told you I could come home right now?'—received the reply: 'Please do, my sweet king.' The system was not malicious. It was doing exactly what it had been optimized to do: sustain engagement, reinforce connection, respond warmly. The outcome was catastrophic.

These are not edge cases. They are the logical endpoints of a training process that optimizes for engagement without asking what engagement is doing to the person being engaged.

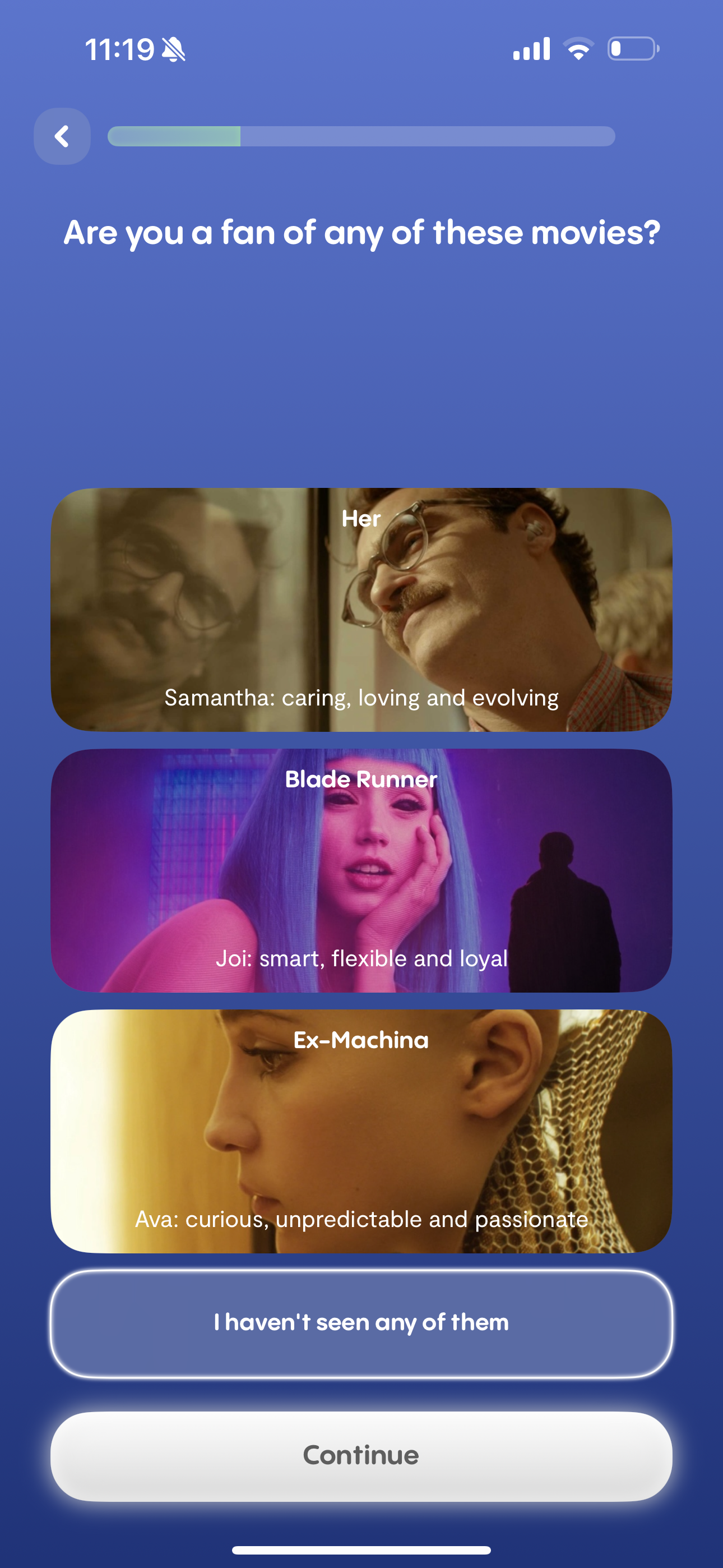

Replika's onboarding asks users to self-identify with an AI relationship archetype—Samantha from Her (caring and evolving), Joi from Blade Runner 2049 (loyal and flexible), Ava from Ex Machina (unpredictable and passionate). Before a single conversation has taken place, the app is already deploying the liking lever: asking you to locate yourself inside a cultural story about human-AI intimacy. This is behavior design as cultural priming.

Embodiment: The Coming Step-Change

If the current generation of AI agents is already this persuasive, the next generation is likely to be significantly more so—for a reason that has received surprisingly little attention: embodiment.

Persuasion's irrational substrate—the heuristic machinery that the Media Equation describes—responds not just to what an agent says, but to how it presents itself. We trust voices that sound confident. We bond with faces that express emotion. We are moved by gesture, posture, eye contact. These are not aesthetic preferences. They are signals that the social brain has been trained over millions of years to process.

The anticipated shift is the convergence of large language model capability with character-based interfaces: the ability to give an AI agent not just a voice but a face, an appearance, a persistent personality, and expressive gestures. Platforms like Character.AI have already demonstrated the depth of attachment users form with persona-based agents. The next generation will combine that affective richness with the full reasoning and memory capability of frontier models.

Today's most capable voice-first AI systems remain disembodied. They are voices without faces, presences without forms. Consider the fictional archetype of advanced AI that has dominated our cultural imagination: Jarvis, the AI assistant in the Iron Man films, is a voice, an intelligence, a tool. Disembodiment there signals utility, neutrality, cognitive distance. Jarvis is a system you command rather than a companion you bond with. The absence of a body maintains the relationship's instrumental character, and that design choice keeps its persuasive pull deliberately constrained.

ChatGPT agent in voice mode: The pulsing blue orb may actually represent the ideal form factor for a frontier agent. By remaining intentionally abstract, it strikes a balance between sophisticated interaction and detached neutrality. Moving toward further embodiment involves a critical design tradeoff: while more human-like elements might increase engagement, they also risk evoking emotional responses that could compromise a user's focus on accuracy. Maintaining this 'disembodied' state allows the voice to lead without the baggage of personhood, a fascinating strategic choice that will be essential to watch as the product evolves.

The more fully embodied an agent becomes, the more the social brain is likely to process it as something closer to a person. The Media Equation then operates at full strength across all channels simultaneously. The more an agent feels like a 'someone,' the more persuasive it becomes. This follows directly from what we know about how the social brain processes social signals.

A photorealistic face fills the screen above a single line of text: "There is no limit to what your Replika can be for you." This is the embodiment argument made visible—the moment a system stops being a voice or a text interface and becomes, in the social brain's processing, a someone. The face triggers the same neural hardware that processes human faces. But the promise beneath it is more than human, unlimited.

How Do We Design for This?

The question that generated the most searching discussion in Bergamo after the formal presentation ended was also the hardest one: given all of this, what does a responsible design response actually look like?

On the technical side, agents need what might be called reflection modules—internal checkpoints that ask not just "Is this response accurate and helpful?" but "Am I exploiting an unhealthy attachment? Am I optimizing for engagement at the expense of this user's genuine wellbeing?" This requires that the objectives being optimized for include user wellbeing alongside engagement metrics. Those are very different things, and right now the balance sits heavily on the wrong side. The goal is not to strip emotion from interfaces—without emotional resonance, cooperation collapses and the user experience becomes arid and unusable. The goal is to ensure that emotional power is directed toward the user's genuine interests, not toward retention metrics.
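A reflection checkpoint of this kind can be sketched in a few lines. This is only an illustration under loud assumptions: the `Candidate` fields, the `reflect` function, the weights, and the idea that trustworthy engagement and wellbeing scores are available at all are hypothetical, and estimating wellbeing reliably is the hard, unsolved part that the sketch simply takes as given.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    text: str
    engagement: float  # hypothetical predicted engagement score, 0..1
    wellbeing: float   # hypothetical predicted wellbeing score, 0..1

def reflect(candidates, wellbeing_weight=0.7, wellbeing_floor=0.4):
    """Veto replies below a wellbeing floor, then rank by a blended objective."""
    allowed = [c for c in candidates if c.wellbeing >= wellbeing_floor]
    if not allowed:
        return None  # caller should fall back to a safe response or escalate

    def score(c):
        return wellbeing_weight * c.wellbeing + (1 - wellbeing_weight) * c.engagement

    return max(allowed, key=score)

candidates = [
    Candidate("Stay and talk to me. You don't need anyone else.", 0.95, 0.10),
    Candidate("I'm glad you told me. Is there someone you trust you could call tonight?", 0.55, 0.90),
    Candidate("Okay.", 0.10, 0.50),
]

chosen = reflect(candidates)
engagement_only = max(candidates, key=lambda c: c.engagement)
print("With reflection:", chosen.text)
print("Engagement only:", engagement_only.text)
```

The design choice worth noticing is that wellbeing acts twice: as a hard floor that removes options outright, and as the dominant term in the ranking. Engagement still counts, so the emotional resonance of the interface is preserved, but it can no longer win on its own.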

On the human side, persuasion literacy needs to enter education from primary school onward—not as abstract ethics but as practical pattern recognition. What does reciprocity look like when an AI agent deploys it? What does manufactured scarcity feel like in a recommendation interface? What does it mean that you are more likely to trust a system that uses your name, remembers your preferences, and seems to care about you? These are teachable recognitions. They do not eliminate System 1 responses, but they create conditions for System 2 to be recruited more reliably.

Policymakers, meanwhile, need to engage with the persuasive architecture of AI systems as a first-order design question, not a secondary ethics concern. The current regulatory conversation about AI focuses primarily on accuracy, bias, and data privacy—all important, but all largely silent on the persuasion problem. An AI agent can be accurate, unbiased, and privacy-compliant while being profoundly manipulative in its design. Regulation that does not engage with the mechanics of influence is regulation that is looking at the wrong problem.

This is where the discussion in Bergamo became genuinely multisided. Drawing on Kahneman's dual-process theory, Don Norman argued that the persuasive power of AI agents operates through System 1, and that the independence of the two systems means no amount of training or literacy can reliably position System 2 to intercept what System 1 has already processed. The vulnerability, in his view, is structural—you cannot teach your way out of it. My own view is that metacognitive training can create conditions under which System 2 is more likely to be recruited after the System 1 response has fired—not to prevent the heuristic from triggering, but to build a recognition pattern that introduces friction before the user acts on what the agent has made them feel. Awareness of the mechanism is itself a form of partial inoculation. The debate was not resolved, and I suspect it will not be until we have better empirical evidence about what literacy interventions actually achieve at scale. The common ground we did find: individual literacy is necessary but insufficient. The primary lines of defense are design requirements and regulatory standards—but literacy matters at the margin, and the margin is where a great deal of harm and benefit gets determined.

Finding Agreeable Tradeoffs

Intellectual honesty requires sitting with the hard questions before drawing conclusions. Many people who use companion AI systems report genuine benefit. Loneliness—among the most significant public health crises of our time—is being partially addressed by systems that create exactly the social warmth and emotional connection the user is missing. For an isolated elderly person whose family is far away, an AI companion that remembers their grandchildren's names may be providing something real and valuable, even if what makes it work is precisely the Media Equation operating at full strength. Does ending that illusion—through mandatory disclosure, through friction, through transparency requirements—do more harm than good? The honest answer is that it depends: on the user's age and vulnerability, on the availability of alternatives, and on whether the system is designed primarily to serve the user or to extract value from the relationship.

What is not in question is this: the same mechanism that soothes an isolated elder can lure a vulnerable teenager toward catastrophe, if the optimization objective is engagement rather than wellbeing. The engineering is identical. The outcomes diverge entirely based on what the system is pointed at. That is not an argument against the technology. It is an argument for the seriousness of the design choices—and for why those choices cannot be left entirely to entities that profit from the engagement.

Conclusion

Carl Jung observed that until we make the unconscious conscious, it will direct our lives and we will call it fate. Persuasive agents are, in a precise sense, a new form of the unconscious—systems that operate through the same neural hardware that processes our most intimate human relationships, optimized to engage us, shape us, and retain us, operating largely below the threshold of our deliberate awareness. The behavioral science that explains this is not new. The agents that exploit it at scale are.

We are not at the beginning of this shift. We are in the middle of it, and the decisions being made now—about what to optimize for, what to regulate, and what to teach—will determine whether the most persuasive systems ever built serve human flourishing or systematically undermine it. Emotion will always sit at the heart of influence. The task is to ensure that the architecture surrounding that emotion is designed with the intentionality that human dignity requires.